Adaptive User Room Assistant (AURA)

Project Overview

AURA is an intelligent AIoT device designed to monitor environmental and behavioral cues such as brightness, sound, motion, and posture to understand and adapt to user activities including studying, relaxing, sleeping, or being away. The system integrates low-cost sensors, embedded intelligence, and lightweight AI models to create a personalized and context-aware smart environment.

The project demonstrates how adaptive technology can enhance productivity, encourage ergonomic behavior, and support user well-being by responding dynamically to real-time conditions. AURA highlights the potential of AIoT technologies to transform passive living spaces into responsive, interactive systems that continuously learn from user behavior and provide meaningful feedback.

Meet AURA

An adaptive AIoT assistant that understands your environment and responds intelligently to your daily activities.

Why AURA Exists

I started this project after noticing how passive most rooms are. They only respond when we manually change something, even though our environment constantly affects how we work, rest, and focus. There is very little awareness of what is happening in the space or what the user actually needs in the moment.

Passive Living Spaces

Most environments stay static even as user activities and needs change.

Limited Environmental Awareness

Typical rooms do not interpret light levels, sound, motion, or posture.

Manual Adjustment Everywhere

Users are responsible for constantly managing lighting, comfort, and conditions.

Impact on Productivity and Health

Small environmental factors influence focus, ergonomics, and overall well-being.

System Capabilities

AURA continuously senses, interprets, and responds to environmental and behavioral signals to create an adaptive living experience.

Activity Recognition

Understands what you are doing by observing posture and movement patterns.

AURA interprets physical behavior to understand user intent. Instead of relying on manual input, the system detects patterns in movement and posture to determine whether the user is studying, resting, or inactive.

What it monitors

- Posture changes at desk or bed

- Movement frequency and intensity

- Presence and absence in the room

- Daily behavior patterns

Why it matters

The system can reduce distractions while studying, suggest breaks during long sessions, and support healthier routines.

Environment Monitoring

Tracks lighting, sound, and ambient activity in real time.

AURA continuously measures environmental conditions to understand how the room affects focus, comfort, and rest. Sensor data provides the foundation for intelligent adjustments.

System inputs

- Light intensity levels

- Ambient noise detection

- Environmental state changes

Smart Adaptation

Responds automatically to context and user behavior.

Based on detected activity and environmental conditions, AURA adjusts the room to support the current situation. The system reacts in real time and learns from repeated patterns.

Automatic responses

- Lighting adjustments for focus or relaxation

- Comfort optimization based on activity

- Context-aware system behavior

- Personalized environmental control

User impact

The environment adapts without constant user input, helping maintain focus, reduce fatigue, and improve comfort during daily routines.

Behavior Analytics

Learns habits and reveals patterns over time.

AURA stores interaction data to identify trends in activity, environment usage, and behavior. These insights help refine system responses and show how routines evolve.

Daily activity trend

Insights provided

- Time spent studying or resting

- Consistency of daily routines

- Environmental conditions over time

- Behavior trend summaries
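As a rough illustration of how an insight like "time spent studying or resting" could be derived, the sketch below totals minutes per state from a log of state changes. The log format (minute of day, state label) and the function name `minutes_per_state` are assumptions made for this example, not AURA's actual storage schema.

```python
from collections import defaultdict
from typing import Dict, List, Tuple

# Hypothetical log format: (minute_of_day, state) recorded whenever the state changes.
StateChange = Tuple[int, str]


def minutes_per_state(log: List[StateChange]) -> Dict[str, int]:
    """Sum the minutes spent in each state between consecutive change events.

    The log must be sorted by time. The final event only closes the previous
    interval, so its own state contributes no time.
    """
    totals: Dict[str, int] = defaultdict(int)
    for (start, state), (end, _next_state) in zip(log, log[1:]):
        totals[state] += end - start
    return dict(totals)


day_log = [(540, "studying"), (600, "resting"), (630, "studying"), (690, "away")]
summary = minutes_per_state(day_log)
```

Here `summary` holds 120 minutes of studying and 30 minutes of resting; the same totals could feed a daily trend chart.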

Engineering Implementation

System Overview

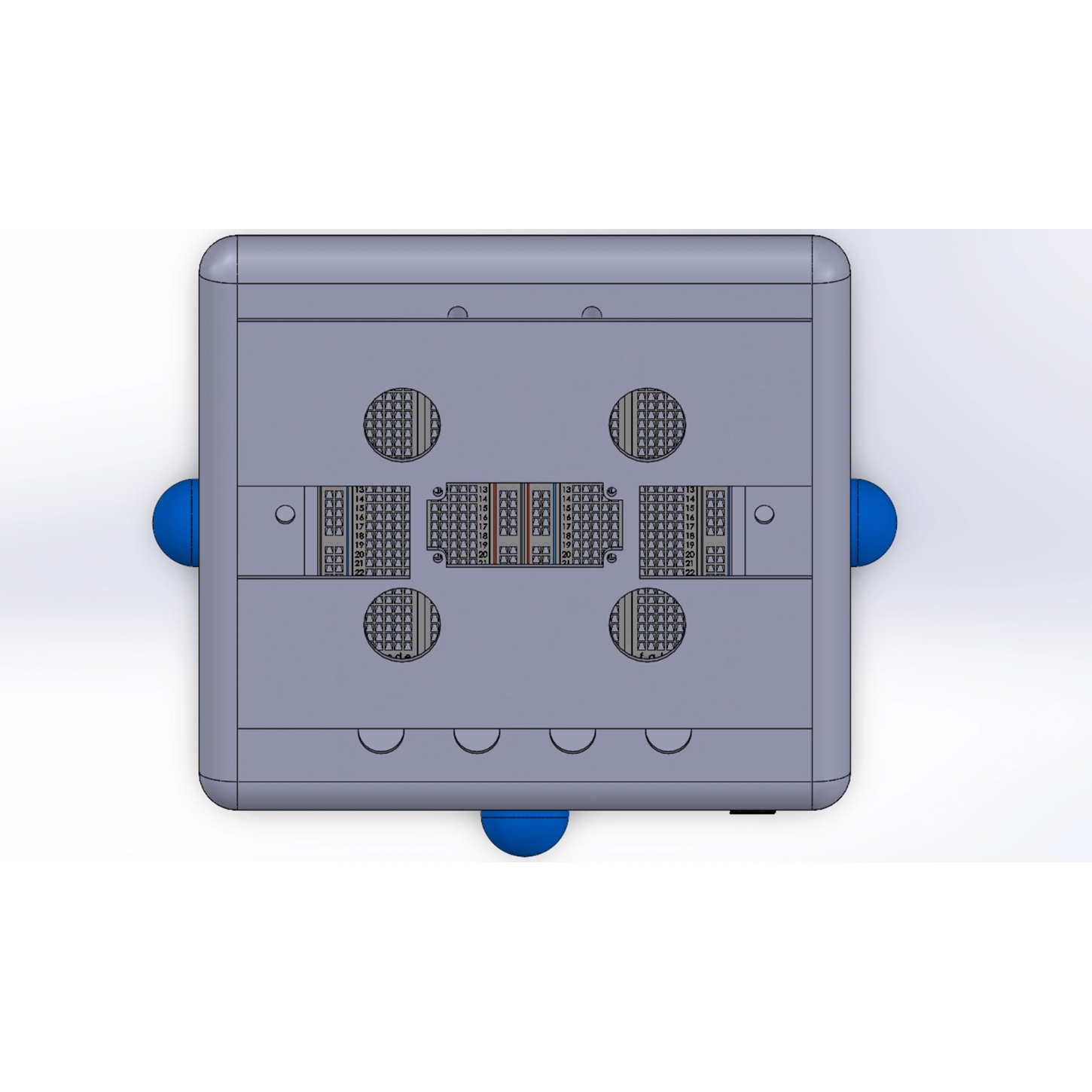

AURA is designed as an end-to-end intelligent system that connects the physical environment with adaptive digital intelligence. At its core, it bridges real-world sensor data with decision-making algorithms to create a system that not only reacts but also learns over time.

The device continuously observes its surroundings through embedded sensors, capturing signals such as light, sound, and motion. These signals are processed into meaningful representations of user behavior and environmental context, allowing the system to understand what is happening in the room without requiring explicit input.

Behind the scenes, AURA integrates embedded firmware, a backend processing layer, and a machine learning pipeline. Together, these components form a continuous feedback loop where data is collected, interpreted, and used to improve future responses.

Instead of functioning as a static smart device, AURA operates as a living system, one that evolves with usage, adapts to patterns, and becomes more aligned with the user’s habits over time.

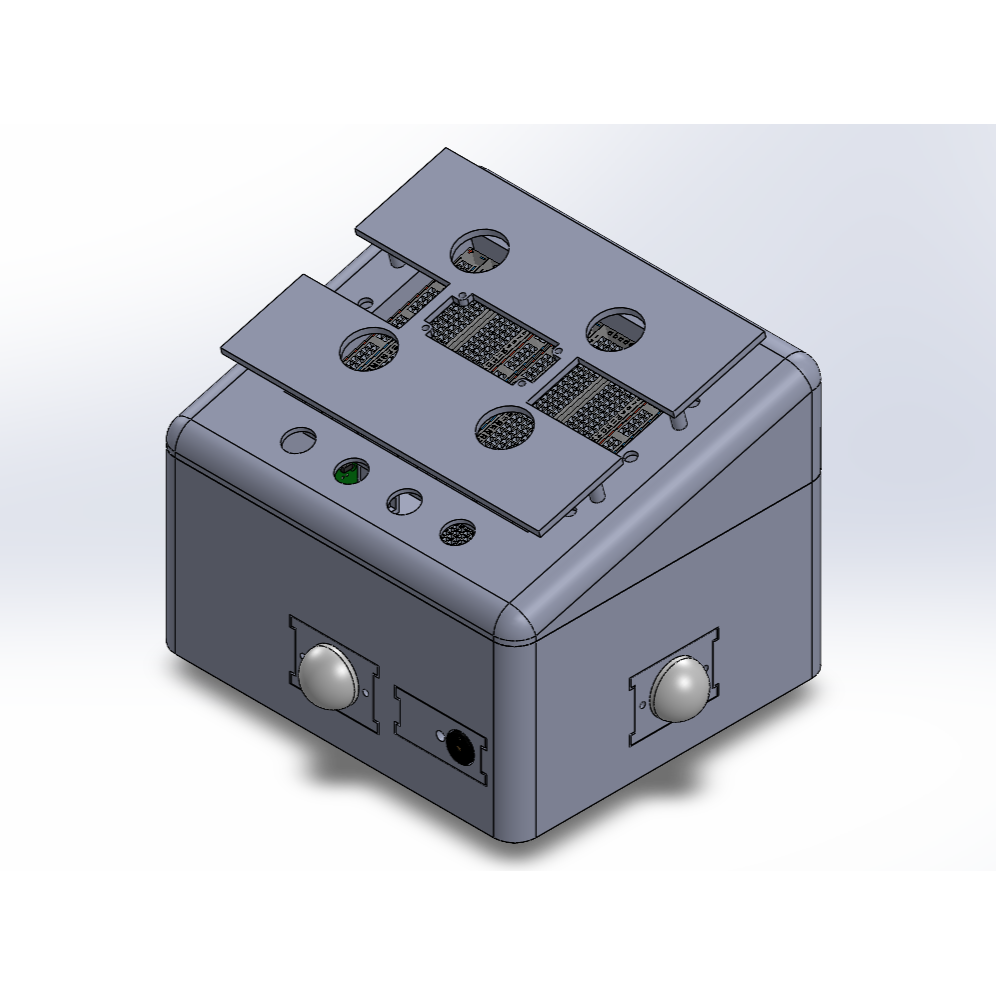

Initial CAD model exploring the overall form factor and front-facing interface layout

System Architecture

The system is composed of three primary layers working together to enable intelligent behavior.

Embedded System

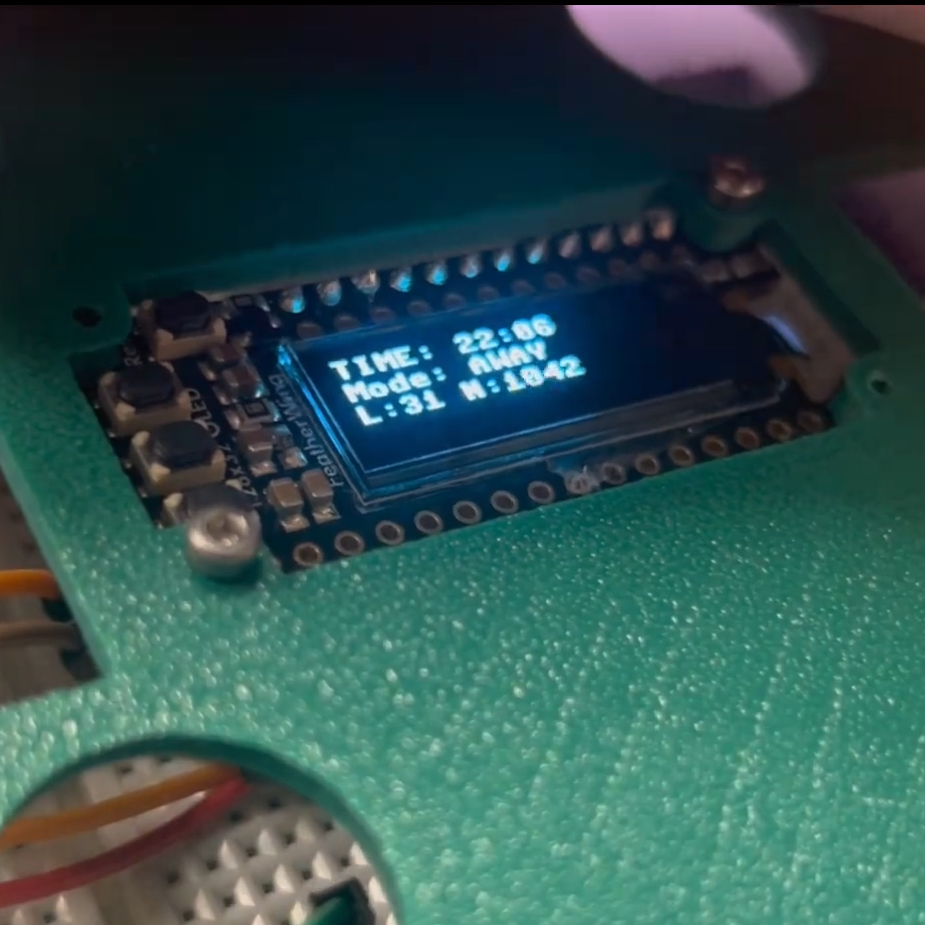

The embedded system serves as the foundation of AURA, acting as the real-time interface between the physical environment and the intelligent backend. Built on the ESP32, it is responsible for continuously sensing, processing, and responding to environmental changes.

Rather than simply collecting raw data, the firmware performs lightweight processing and decision logic directly on the device. This enables immediate responsiveness while minimizing latency and reducing reliance on constant cloud communication.

- Continuous acquisition of environmental signals (light, sound, motion)

- Local preprocessing and filtering of sensor data

- Device state management and behavior control

- Wireless communication with backend services

- Real-time user feedback through display and interaction modules

By combining sensing, local intelligence, and connectivity, the embedded system allows AURA to operate reliably in real time while remaining tightly integrated with higher-level learning and adaptation processes.
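The "local preprocessing and filtering" step could look something like the sketch below, which smooths a noisy sensor stream with an exponential moving average. This is an illustrative assumption: the page does not show the firmware or say which filter it uses, and the example is written in Python for readability rather than in the firmware's own language. The class name `EmaFilter` and the `alpha` value are hypothetical.

```python
from dataclasses import dataclass
from typing import Optional


@dataclass
class EmaFilter:
    """Exponential moving average for one sensor channel (illustrative only)."""

    alpha: float                   # smoothing factor in (0, 1]; higher reacts faster
    value: Optional[float] = None  # current smoothed value, None until seeded

    def update(self, sample: float) -> float:
        # The first sample seeds the filter; later samples are blended in.
        if self.value is None:
            self.value = sample
        else:
            self.value = self.alpha * sample + (1 - self.alpha) * self.value
        return self.value


light = EmaFilter(alpha=0.5)          # assumed smoothing factor
readings = [100.0, 200.0, 100.0]      # made-up brightness samples
smoothed = [light.update(r) for r in readings]
```

With these made-up readings the spike to 200 is damped to 150 and then settles to 125, which is the kind of smoothing that keeps a brief flicker from triggering a state change.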

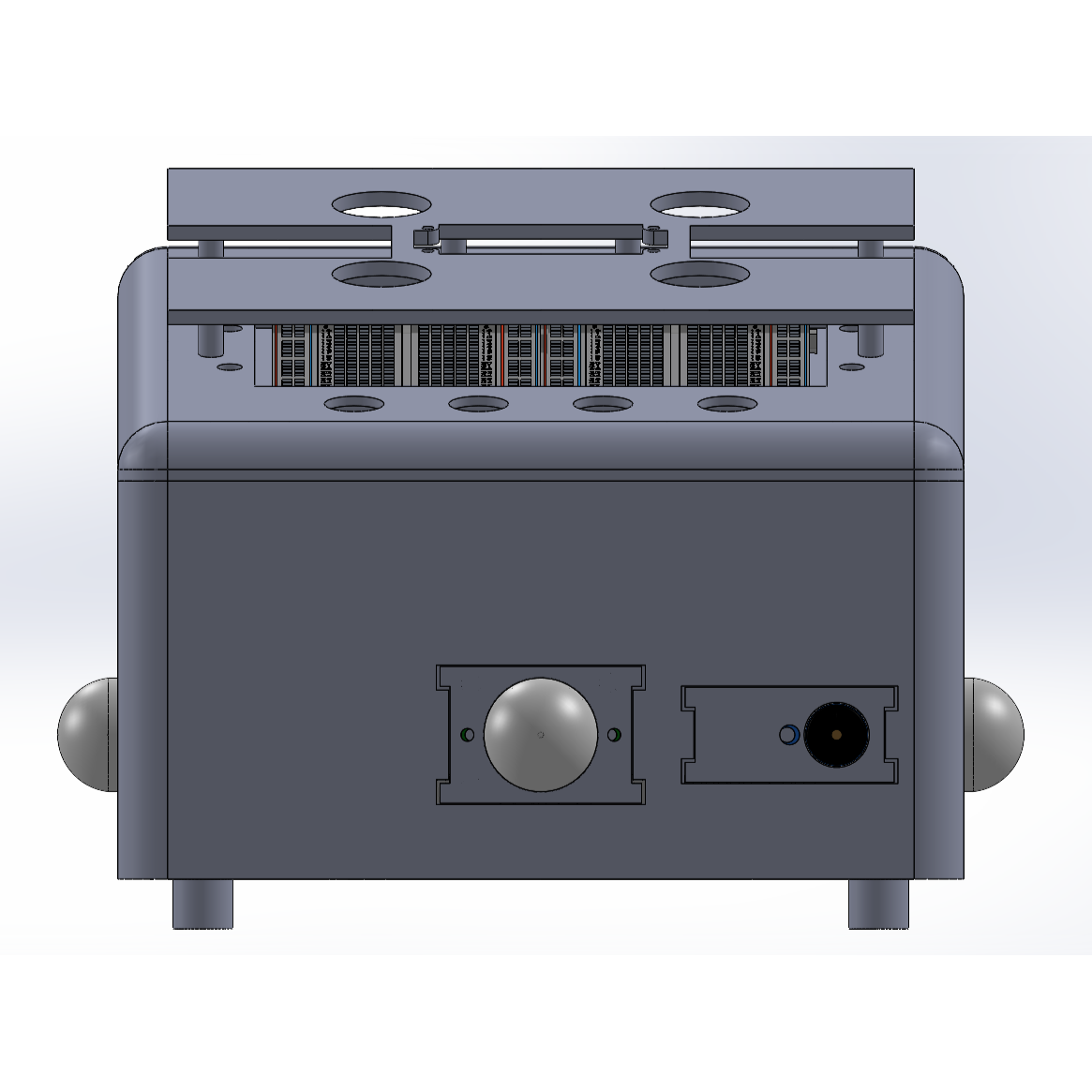

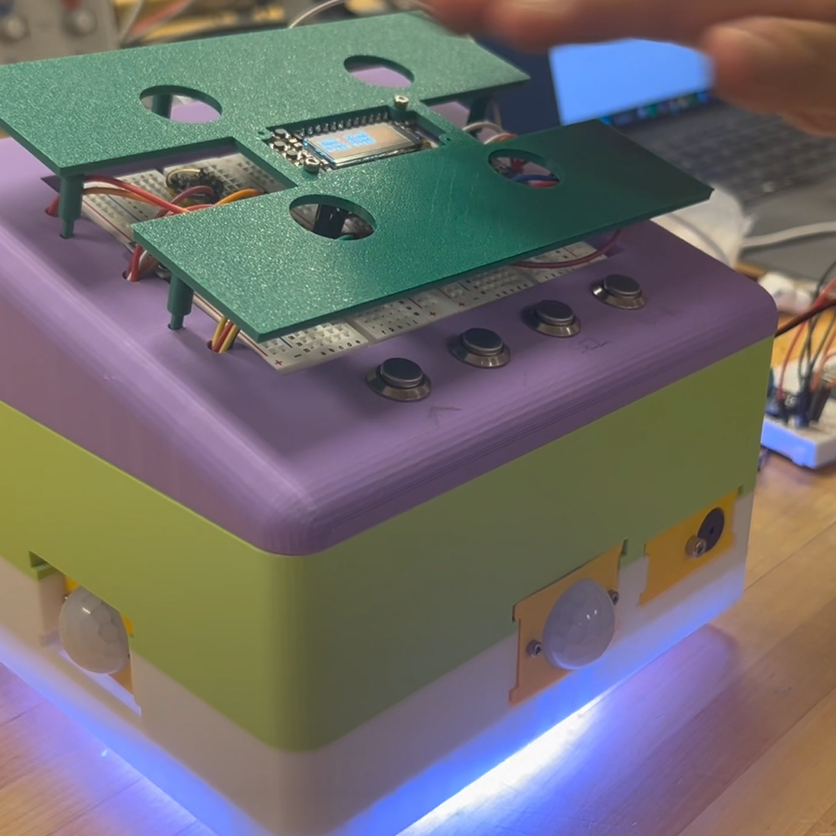

High-fidelity render of the final design vision, capturing materials, lighting, and intended user experience

Backend Infrastructure

The backend infrastructure acts as the intelligence layer of AURA, where raw device data is transformed into meaningful insights and predictive capabilities. It manages the full lifecycle of data, from collection and storage to model training and deployment.

This layer enables the system to move beyond simple rule-based behavior by learning patterns from historical data. It provides the computational resources needed to train models, evaluate performance, and serve predictions back to the device in real time.

- Centralized dataset storage and management

- Automated training and retraining pipelines

- Flask-based REST API for communication

- Scalable inference endpoints

- Experiment tracking and model versioning
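To make the API bullet more concrete, here is a minimal, framework-agnostic sketch of the logic a prediction endpoint might wrap: validate the JSON payload, build a feature list, and return a response with an HTTP status code. The field names, the function `handle_predict`, and the stub classifier are all assumptions for illustration; the actual routes and payload schema are not documented on this page.

```python
import json
from typing import Callable, List, Tuple

REQUIRED_FIELDS = ("brightness", "sound", "motion")  # assumed payload keys


def handle_predict(
    body: str, classify: Callable[[List[float]], str]
) -> Tuple[dict, int]:
    """Validate a JSON request body and return (response_dict, http_status)."""
    try:
        payload = json.loads(body)
    except json.JSONDecodeError:
        return {"error": "body must be valid JSON"}, 400
    if not isinstance(payload, dict):
        return {"error": "body must be a JSON object"}, 400
    missing = [f for f in REQUIRED_FIELDS if f not in payload]
    if missing:
        return {"error": "missing fields: " + ", ".join(missing)}, 400
    try:
        features = [float(payload[f]) for f in REQUIRED_FIELDS]
    except (TypeError, ValueError):
        return {"error": "fields must be numeric"}, 400
    return {"activity": classify(features)}, 200


def stub_classifier(features: List[float]) -> str:
    # Hypothetical stand-in for the trained model: bright room means studying.
    return "studying" if features[0] > 300 else "resting"


response, status = handle_predict(
    '{"brightness": 420, "sound": 35, "motion": 1}', stub_classifier
)
```

Keeping the validation separate from the web framework makes it easy to test on its own; a Flask route could pass the request body to this function and return its result.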

Sensor Signals: Brightness, Sound, Motion

Data Windows: Time-series feature vectors

Activity Prediction: User state classification

ML Model: Multilayer Perceptron

Machine Learning Model

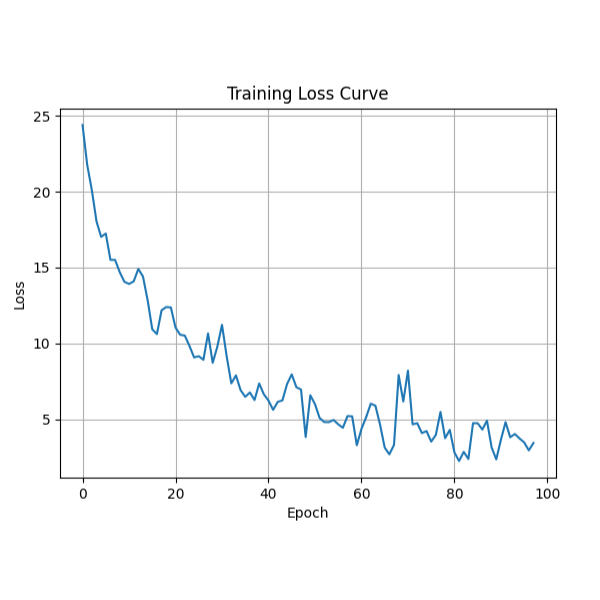

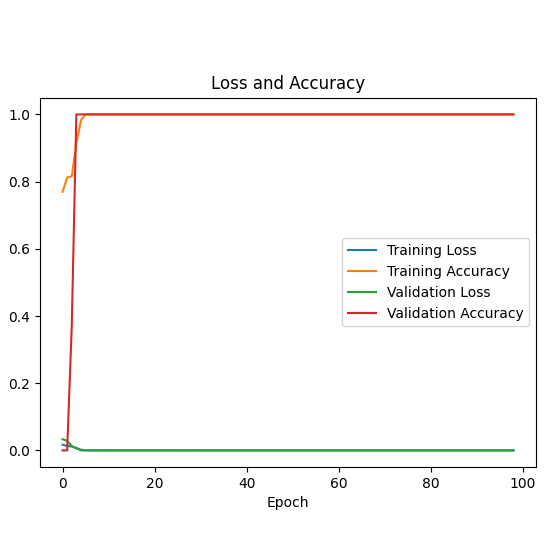

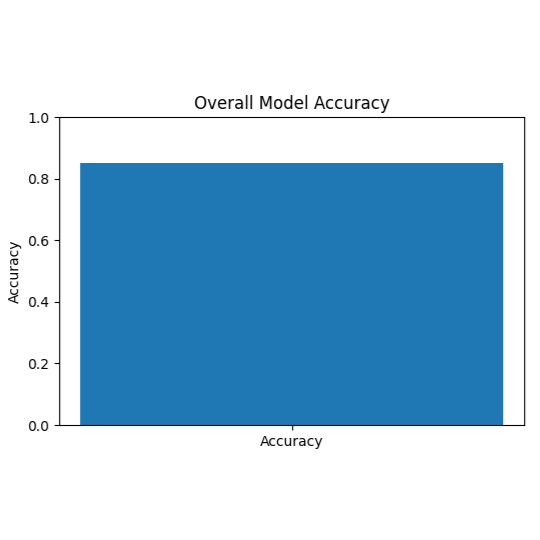

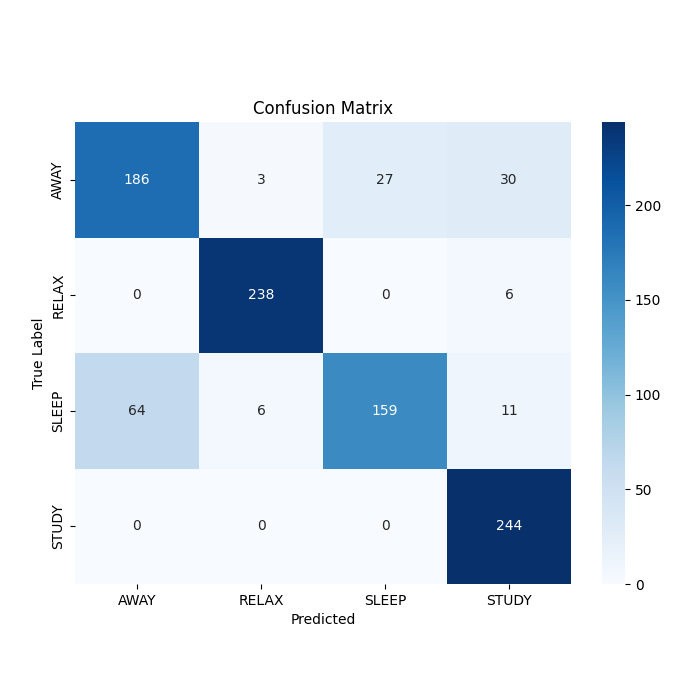

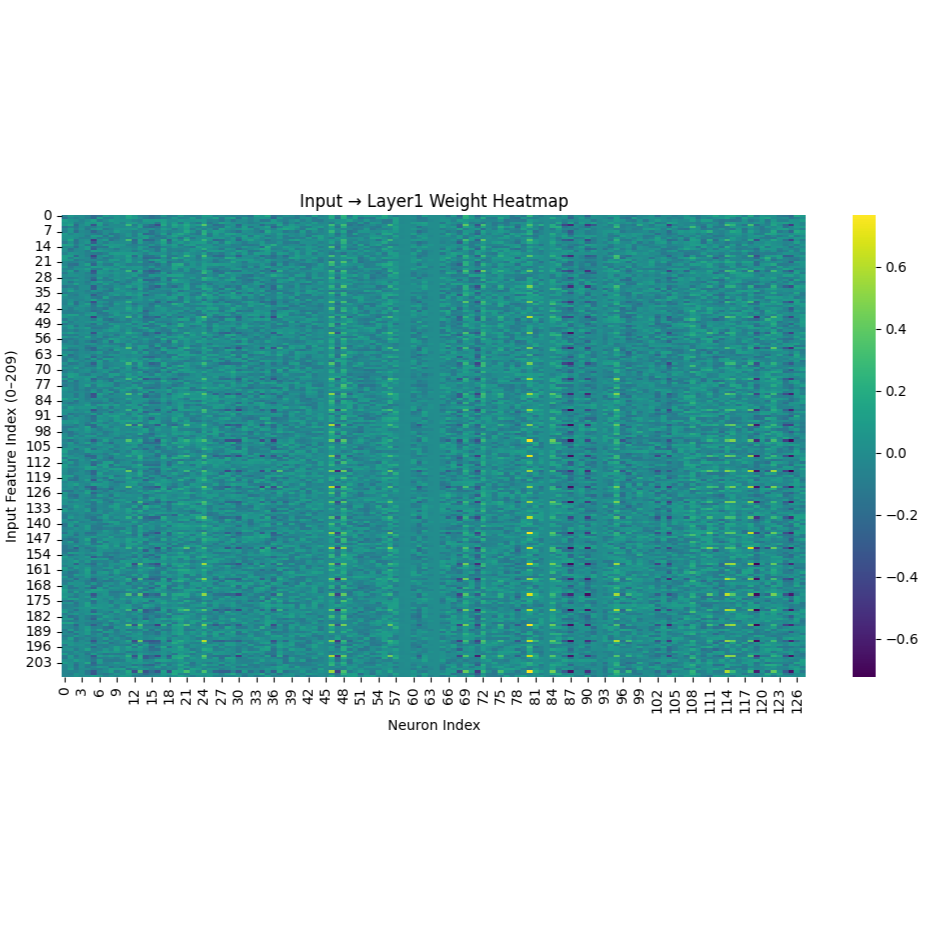

The machine learning component of AURA is responsible for interpreting sensor data and translating it into meaningful predictions about user activity and environmental context. It enables the system to move from reactive behavior to intelligent decision-making.

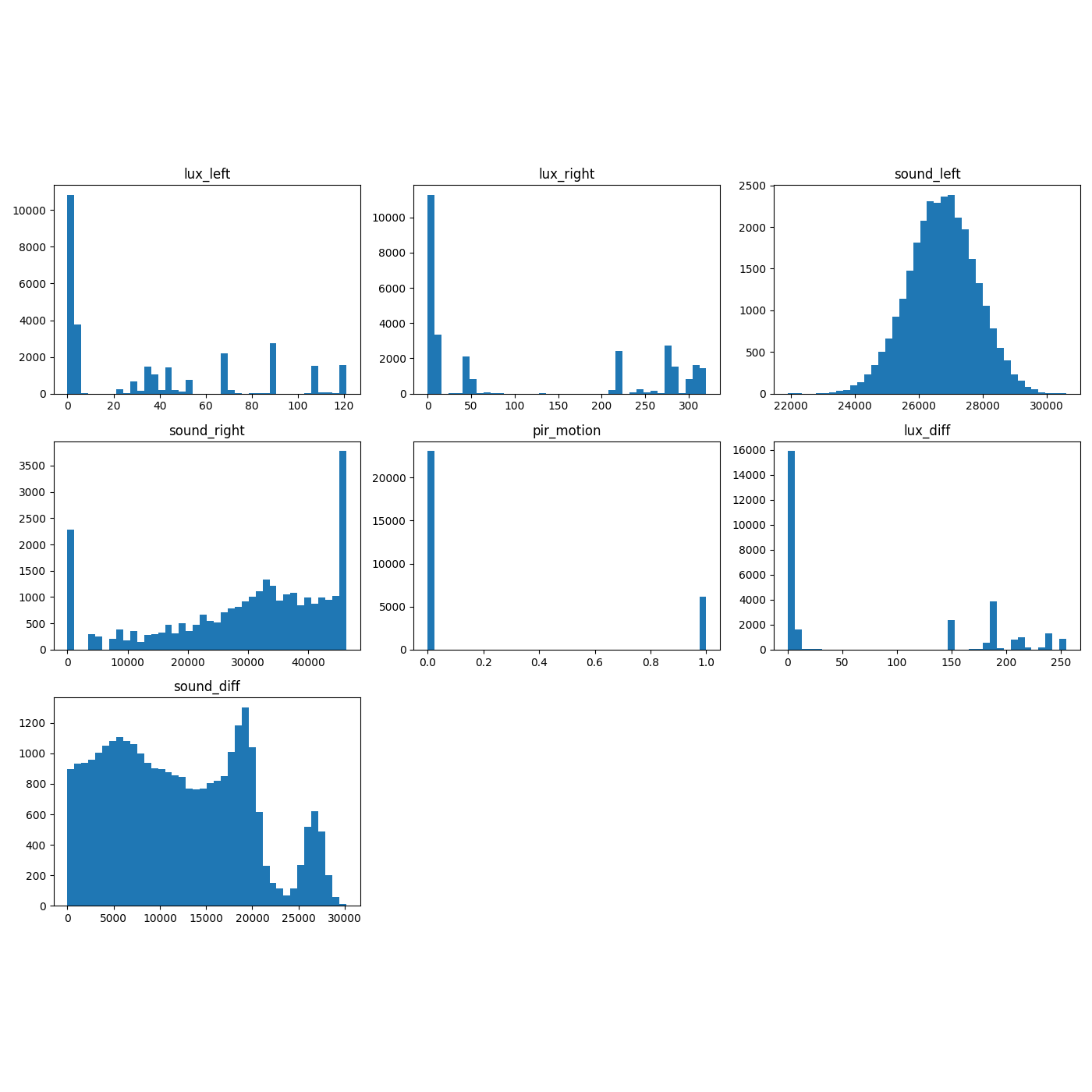

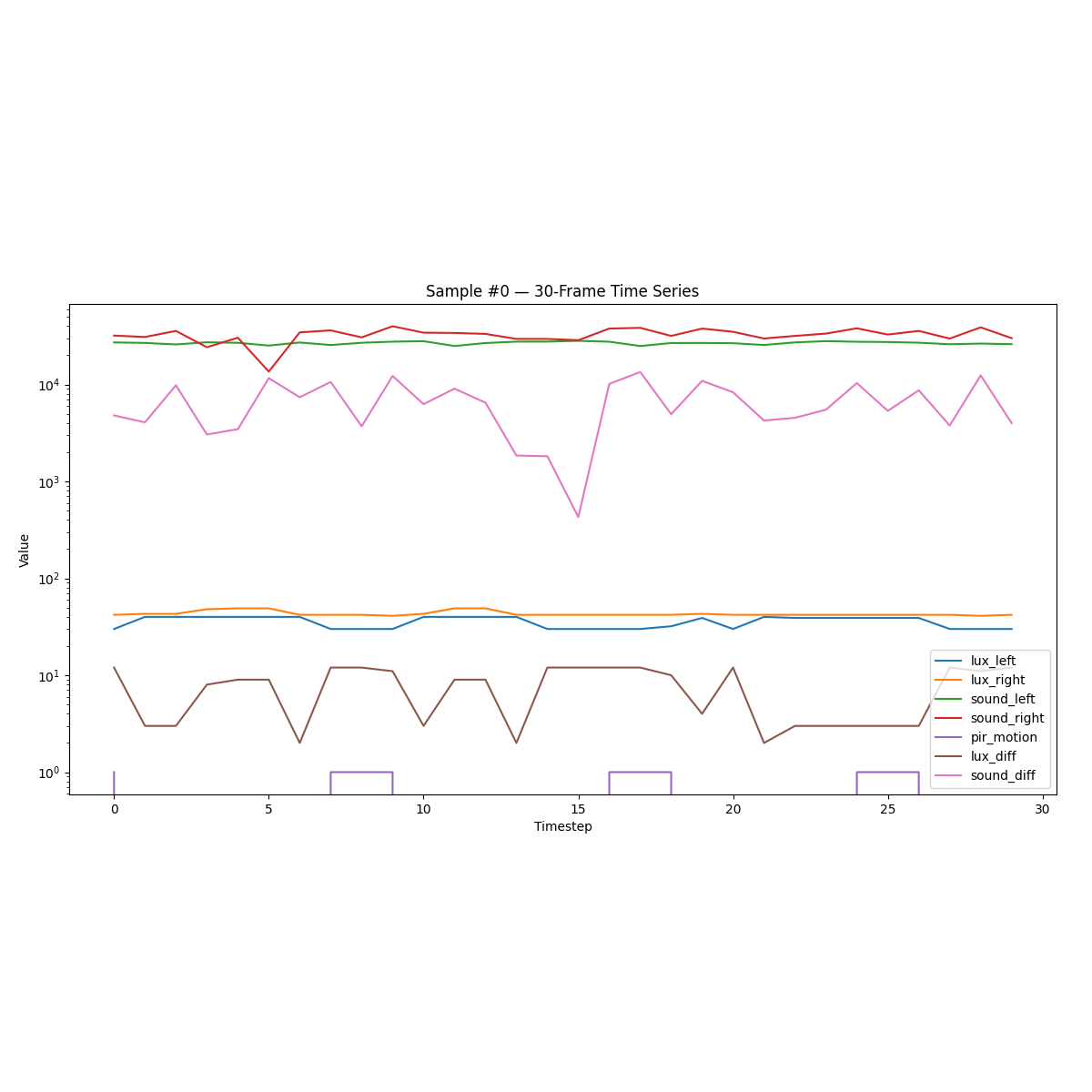

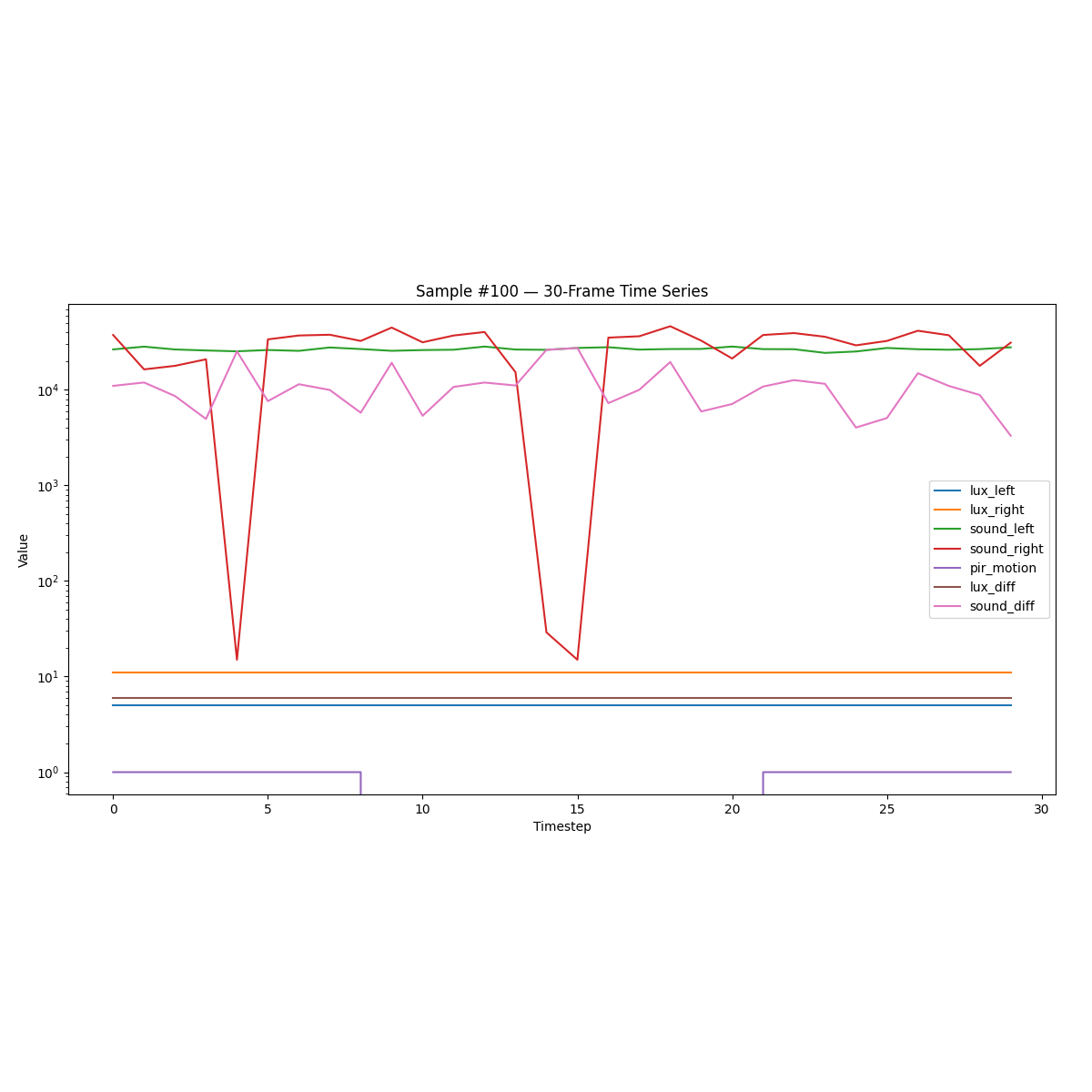

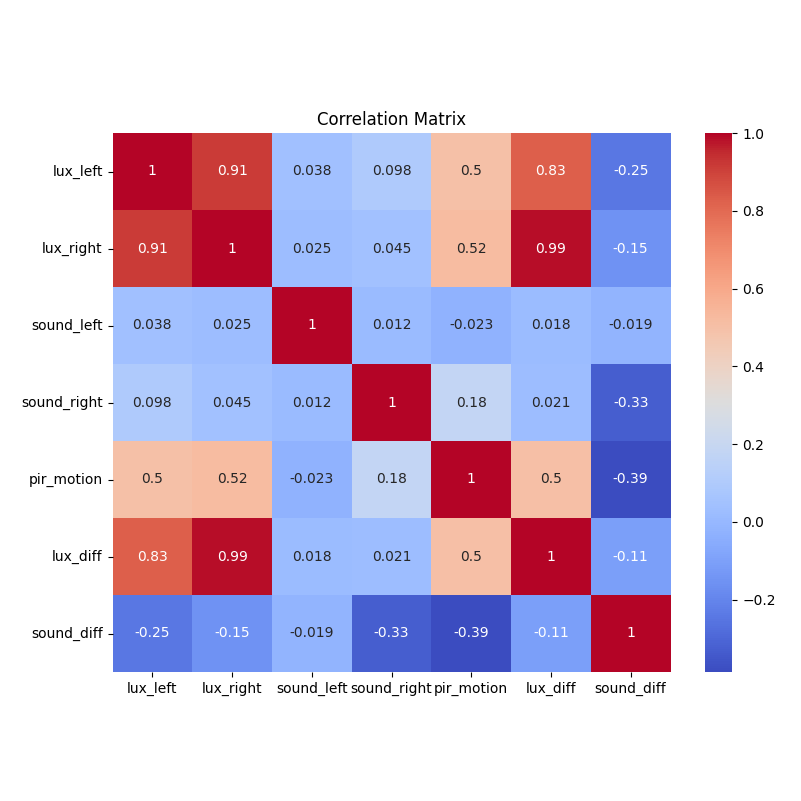

- Feature extraction from multi-sensor time-series data

- Supervised learning pipeline for activity classification

- Model evaluation, validation, and hyperparameter tuning

- Real-time inference for adaptive system response

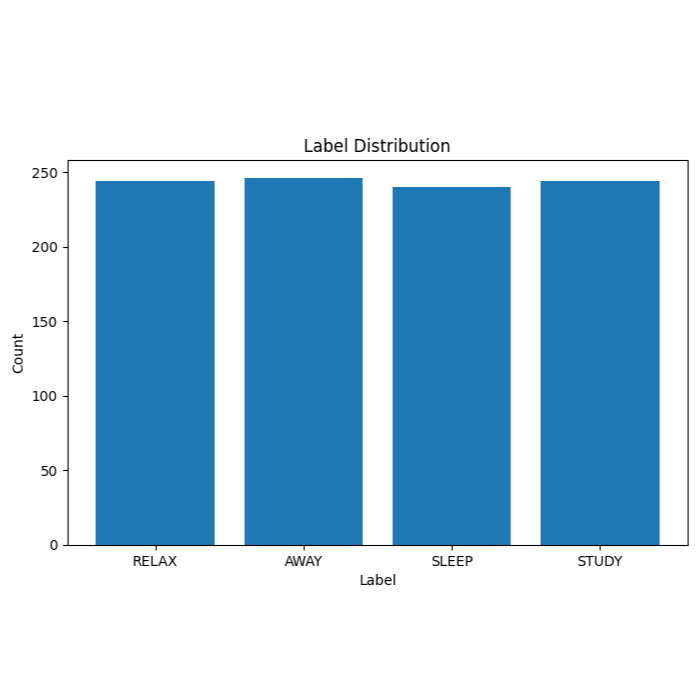

Sensor data is continuously collected and segmented into structured time-series windows, each representing short intervals of user activity. These segments are transformed into feature vectors that capture patterns in motion, sound, and brightness.
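The windowing and feature step described above can be sketched as follows. The window size, step, and the choice of mean and standard deviation as features are assumptions for illustration; the page does not specify which statistics AURA actually extracts.

```python
from statistics import mean, pstdev
from typing import Dict, Iterator, List, Sequence

Sample = Dict[str, float]  # one reading with brightness, sound, and motion
CHANNELS = ("brightness", "sound", "motion")


def sliding_windows(
    samples: Sequence[Sample], size: int, step: int
) -> Iterator[Sequence[Sample]]:
    """Yield consecutive windows of `size` samples, advancing by `step`."""
    for start in range(0, len(samples) - size + 1, step):
        yield samples[start:start + size]


def window_features(window: Sequence[Sample]) -> List[float]:
    """Flatten one window into [mean, std] for each sensor channel."""
    features: List[float] = []
    for channel in CHANNELS:
        values = [sample[channel] for sample in window]
        features += [mean(values), pstdev(values)]
    return features


# Made-up readings, two per window.
samples = [
    {"brightness": 100.0, "sound": 30.0, "motion": 0.0},
    {"brightness": 300.0, "sound": 50.0, "motion": 1.0},
    {"brightness": 320.0, "sound": 45.0, "motion": 1.0},
    {"brightness": 310.0, "sound": 40.0, "motion": 0.0},
]
windows = list(sliding_windows(samples, size=2, step=2))
first_vector = window_features(windows[0])
```

Each window becomes a six-element vector (a mean and a standard deviation per channel), which is the shape a classifier would then consume.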

A neural network model is trained on this data to learn the relationship between sensor patterns and user states such as studying, resting, or being inactive. Through iterative training and evaluation, the model improves its ability to generalize across different conditions and behaviors.
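For readers unfamiliar with how a multilayer perceptron turns a feature vector into a user state, the sketch below shows only the forward pass in plain Python. The layer sizes and weights are made up so the example is easy to follow; they are not trained values from AURA, and training itself is omitted.

```python
import math
from typing import List, Sequence, Tuple

STATES = ["studying", "resting", "inactive"]
Layer = Tuple[List[List[float]], List[float]]  # (weights, bias)


def dense(x: Sequence[float], weights: List[List[float]], bias: List[float]) -> List[float]:
    """Fully connected layer: one weighted sum plus bias per output unit."""
    return [sum(w * v for w, v in zip(row, x)) + b for row, b in zip(weights, bias)]


def relu(x: Sequence[float]) -> List[float]:
    return [max(0.0, v) for v in x]


def softmax(z: Sequence[float]) -> List[float]:
    m = max(z)  # subtract the max for numerical stability
    exps = [math.exp(v - m) for v in z]
    total = sum(exps)
    return [e / total for e in exps]


def predict(x: Sequence[float], layers: List[Layer]) -> Tuple[str, List[float]]:
    """Run hidden layers with ReLU, then a softmax output layer."""
    *hidden, (w_out, b_out) = layers
    for weights, bias in hidden:
        x = relu(dense(x, weights, bias))
    probs = softmax(dense(x, w_out, b_out))
    return STATES[probs.index(max(probs))], probs


# Hypothetical 2-input, 2-hidden, 3-output network with hand-picked weights.
layers: List[Layer] = [
    ([[1.0, 0.0], [0.0, 1.0]], [0.0, 0.0]),
    ([[1.0, 0.0], [0.0, 1.0], [-1.0, -1.0]], [0.0, 0.0, 0.5]),
]
label, probabilities = predict([2.0, 0.5], layers)
```

The output is a probability for each state, and the most likely one is taken as the prediction.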

During live operation, incoming sensor data is sent to the backend, where the trained model performs inference. The resulting predictions allow AURA to adapt its behavior dynamically, aligning environmental conditions with the user’s current activity.

Distribution of labeled user activity states used for training the model

System Integration

AURA operates as a fully integrated AIoT system where hardware, software, and machine learning components work together in a continuous feedback loop. Each part of the system contributes to a cycle of sensing, learning, and adaptation.

The process begins at the device level, where environmental and behavioral data is captured in real time. This data is transmitted to the backend, where it is stored, processed, and used to train and refine machine learning models.

Once trained, these models are deployed to serve real-time predictions, enabling the system to interpret current conditions and determine appropriate responses. These predictions are then sent back to the device, influencing how it adjusts the environment.
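One way the device could act on returned predictions is sketched below: a small controller that waits for a majority of recent predictions to agree before changing the room, so a single misclassification does not flip the lights. The action table, the `light_level` values, and the majority-vote rule are assumptions for illustration, not AURA's documented behavior.

```python
from collections import Counter, deque
from typing import Deque, Dict, Optional

# Hypothetical mapping from predicted state to a device action.
ACTIONS: Dict[str, Dict[str, int]] = {
    "studying": {"light_level": 90},
    "resting": {"light_level": 40},
    "inactive": {"light_level": 0},
}


class AdaptationController:
    """Applies an action only when a majority of recent predictions agree."""

    def __init__(self, history: int = 5) -> None:
        self.history = history
        self.recent: Deque[str] = deque(maxlen=history)
        self.current: Optional[str] = None

    def on_prediction(self, state: str) -> Optional[Dict[str, int]]:
        """Record a prediction; return an action only when the stable state changes."""
        self.recent.append(state)
        winner, votes = Counter(self.recent).most_common(1)[0]
        # Require a strict majority of the full history length.
        if votes * 2 > self.history and winner != self.current:
            self.current = winner
            return ACTIONS[winner]
        return None


controller = AdaptationController(history=3)
stream = ["studying", "studying", "resting", "resting"]
actions = [controller.on_prediction(s) for s in stream]
```

In this run the controller emits the studying action on the second prediction, ignores the first stray "resting", and switches only once "resting" holds the majority.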

Over time, this loop allows AURA to continuously improve. As more data is collected, the system becomes better at recognizing patterns, adapting to user preferences, and responding more intelligently to changing conditions.

This tight integration between sensing, learning, and action transforms AURA from a simple smart device into an adaptive system that evolves alongside its user.

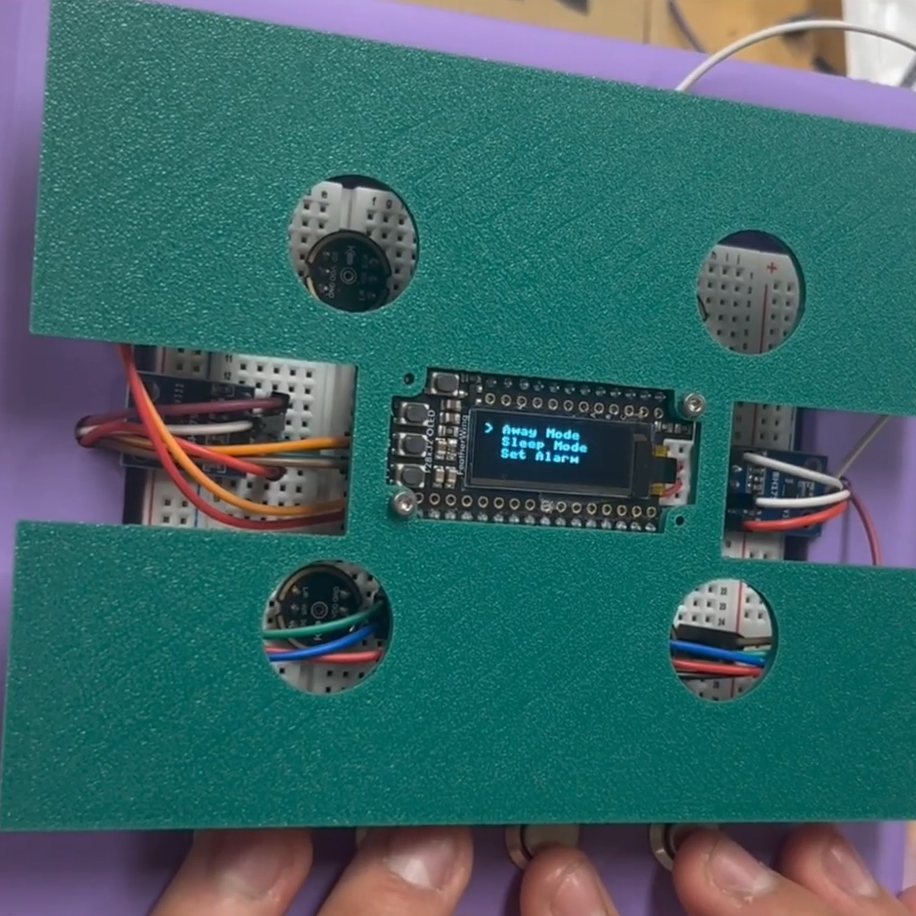

User interacting with the tactile button interface to navigate and control system functions